Introduction (X-45)

Forecasting changes fundamentally whenever we try to predict a very rare event. We must shift what we are modelling to focus on tail events. From performance metrics and target definition to the tail model and the transformer output heads, every part of rare-event forecasting is difficult. Difficult, yet worth it.

The Halloween storms of 2003 began as a disturbance on the Sun, a single dark spot that created one of the strongest space weather events of the satellite era. From late October to early November, a series of enormous active regions churned across the solar disk, releasing powerful flares and clouds of magnetized plasma towards Earth. The event was a visually striking flare-up with radio-wave implications.

Satellites malfunctioned, GPS and radio were disrupted, and airlines rerouted polar flights. According to NOAA, power grids worldwide were affected, with some currents exceeding 100 amps, leading to the Malmö Blackout in Sweden. At 20:07 UT, a power outage hit the region, leaving approximately 50,000 customers without electricity for 20 to 50 minutes.

Image credit: NASA / Solar Dynamics Observatory (SDO) / AIA. Public domain

An international shock, the event saturated GOES X-ray sensors, so the true size of the flare could be calculated only through reconstruction. It is often called X-45 after its magnitude, 450 times larger than an M-1, a medium flare. The table below shows the Flare Richter Scale.
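The 450-fold figure follows from the GOES classification, where the letter sets a power of ten of peak soft X-ray flux (in W/m²) and the number multiplies it. Here is a minimal sketch of that arithmetic; `class_to_flux` is a hypothetical helper written for this post, not a function from any library.

```python
# Peak soft X-ray flux (W/m^2) at the lower edge of each GOES flare class.
GOES_BASE_FLUX = {"A": 1e-8, "B": 1e-7, "C": 1e-6, "M": 1e-5, "X": 1e-4}


def class_to_flux(label: str) -> float:
    """Convert a label such as 'M-1', 'M1' or 'X45' to peak flux in W/m^2."""
    cleaned = label.replace("-", "").strip().upper()
    letter, multiplier = cleaned[0], float(cleaned[1:])
    return GOES_BASE_FLUX[letter] * multiplier


ratio = class_to_flux("X-45") / class_to_flux("M-1")
print(f"X-45 is about {ratio:.0f} times an M-1 flare")
```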

The Prediction Problem

A paradoxical problem with catastrophes is that the more catastrophic they are, the rarer they tend to be. Think floods, snowstorms and avalanches. A 50-year storm happens, on average, once in fifty years. This is usually a good thing, but their rarity makes them incredibly hard to predict.

Several things make predicting rare events a particularly interesting challenge in machine learning:

- Changing our metrics for model evaluation

- Engineering features from magnetic field data

- Building a tail model to specifically capture rare events

- Combining the tail model with the full-distribution model using a transformer

A note on accuracy, which is typically a good metric for binary classification: it breaks down when one class is very rare. If only 100 of 10,000 forecasts contained a major flare, we could achieve 99% accuracy while missing every single one. We could simply guess “it won’t happen” every single time.

Accuracy = (10,000-100)/10,000 = 9900/10,000 = 0.99 = 99%

True Positives = 0
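The same arithmetic can be checked in a few lines. This sketch uses only the counts from the example above and adds recall (the fraction of real flares caught), which exposes what accuracy hides.

```python
# Counts from the example: 10,000 forecasts, 100 of which contain a major flare.
n_forecasts = 10_000
n_flares = 100

# A "model" that always answers "no flare".
true_positives = 0
false_negatives = n_flares
true_negatives = n_forecasts - n_flares
false_positives = 0

accuracy = (true_positives + true_negatives) / n_forecasts
recall = true_positives / (true_positives + false_negatives)

print(f"accuracy = {accuracy:.0%}, recall = {recall:.0%}")
```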

The Data

If you’re interested in where this data comes from: all the data we have on solar flares comes from an altogether different layer of the Sun than the one where flares occur. It comes from the photosphere, the Sun’s first visible layer.

Flares occur in the corona and chromosphere. The data is collected by the Solar Dynamics Observatory (SDO), a NASA spacecraft that continuously observes the Sun to monitor its activity, using its Helioseismic and Magnetic Imager (HMI).

Model Input

Fortunately, thanks to NASA, our satellite’s construction, launch, and deployment have already been completed, and we can now focus on our model input. A vector magnetogram estimates the magnetic field vector B. First observations come in two flavours:

From this starting point, the Space Weather HMI Active Region Patch (SHARP) data product does two things:

- Localization

- Feature engineering

This means selecting active regions on the Sun (localization) and computing magnetic parameters that better describe their magnetic structure (feature engineering).

The important lesson here is that, to address how rare the event we are trying to predict is, we focus on gathering data from locations where it is most likely to happen. We take our starting measurement data on the magnetic fields and compute different features like:

Our input data become a function of time and engineered features:

If our model uses the past 24 hours and 9 engineered features, our input would be a matrix with one row per time step in that window and one column per feature.
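To make the shape concrete, here is a small sketch of such an input. The hourly cadence is an assumption made for illustration (a finer cadence simply gives more rows), and the random numbers are placeholders for real engineered features.

```python
import random

HOURS_OF_HISTORY = 24
CADENCE_MINUTES = 60  # assumption: one sample per hour
N_FEATURES = 9

n_steps = HOURS_OF_HISTORY * 60 // CADENCE_MINUTES

random.seed(0)
# Placeholder values standing in for engineered magnetic features.
x = [[random.gauss(0.0, 1.0) for _ in range(N_FEATURES)] for _ in range(n_steps)]

shape = (len(x), len(x[0]))
print(shape)  # (time steps, features)
```

With these settings the input for one active region has 24 rows and 9 columns. Changing `CADENCE_MINUTES` to 12 would give 120 rows for the same 24-hour window.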

Model Target

We might as well make our target more precise now. We define it as the probability of observing a flare of at least M-1 class in the next 24 hours, given the magnetic history. Here, the magnetic history is our entire input data.
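One way to turn this definition into training labels is sketched below. It assumes we have a series of peak soft X-ray flux values at a fixed cadence and treats "M-1 class" as "peak flux of at least 1e-5 W/m²". The function `make_labels` and the toy series are hypothetical, written only for illustration.

```python
M1_FLUX = 1e-5  # W/m^2, lower edge of the M class


def make_labels(flux, horizon, threshold=M1_FLUX):
    """Label step t as 1 if any of the next `horizon` samples reaches the threshold.

    Steps near the end that lack a full future window are dropped.
    """
    labels = []
    for t in range(len(flux) - horizon):
        future = flux[t + 1 : t + 1 + horizon]
        labels.append(int(max(future) >= threshold))
    return labels


# Toy series with a single M-class peak at index 5.
flux = [2e-7, 3e-7, 1e-6, 4e-7, 8e-7, 3e-5, 9e-7, 2e-7]
labels = make_labels(flux, horizon=3)
print(labels)
```

With hourly samples, the 24-hour target from the text would correspond to `horizon=24`.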

But there are many implicit design decisions we’ve made that the following table makes explicit.

Notice that there are many options when constructing our target. This is a major problem when comparing different models. It’s worth noting that simply taking more data is not always better, as events further in the past tend to be less powerful predictors of future events. This introduces a signal-to-noise problem with regard to your training window.

The Metric TSS

To solve the problem presented earlier of having a model with 99% accuracy and zero recall, we introduce a different metric called the True Skill Statistic (TSS), defined as the difference between the true positive rate and the false positive rate. TSS rewards true positives while also punishing false positives. A model that always predicts “no flare” has a true positive rate of zero and a false positive rate of zero, so its TSS is zero.
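A minimal sketch of the computation follows. The first call uses the always-"no flare" counts from the accuracy example. The second set of counts is invented purely to show how a model that raises false alarms can still score well.

```python
def true_skill_statistic(tp, fn, fp, tn):
    """TSS = TP / (TP + FN) - FP / (FP + TN)."""
    true_positive_rate = tp / (tp + fn)
    false_positive_rate = fp / (fp + tn)
    return true_positive_rate - false_positive_rate


# Always predicting "no flare": 100 flares in 10,000 forecasts, all missed.
tss_always_no = true_skill_statistic(tp=0, fn=100, fp=0, tn=9_900)

# Hypothetical model: catches 80 flares at the cost of 990 false alarms.
tss_hypothetical = true_skill_statistic(tp=80, fn=20, fp=990, tn=8_910)

print(tss_always_no, round(tss_hypothetical, 2))
```

The hypothetical model has a lower accuracy than the trivial one (89.9% against 99%), yet TSS ranks it far higher, which is the behaviour we want for rare events.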

Making a tail model

Because of flare rarity, if we use the following risk objective, we will find that common events, where no solar flare was present, dominate the loss term. Rare events barely contribute because they happen so seldom, even though they are the most relevant to what we are trying to predict. The model can become very good at the bulk of the distribution while learning very little about the extreme events we are interested in. This is why it makes sense to consider a dedicated tail model.

We can describe the problem more precisely by saying that our objective is frequency-weighted: frequent events dominate the loss term, while rare events contribute the least, even though they are what our model most needs to learn.

So that our model can learn mostly from rare events, we choose a constant threshold for a continuous variable such as soft X-ray flux; anything that measures flare severity could work. We set our target to the excess of the observed flare-severity variable over the threshold, and use only data from the tail of the distribution, where the observed value is above the threshold.

Then the data we model is:

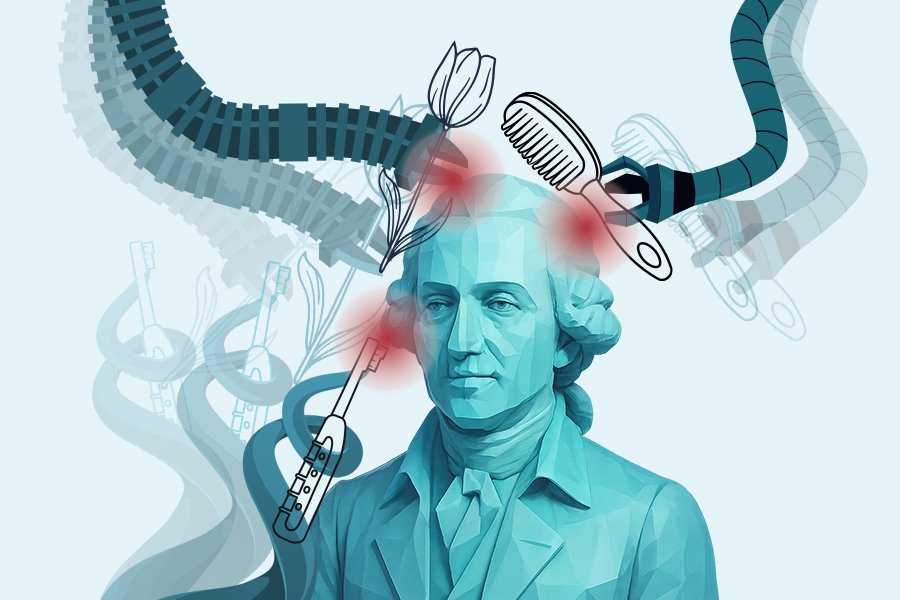
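As a sketch of this peaks-over-threshold step, the snippet below keeps only observations above a threshold and subtracts the threshold from them. The flux values and the choice of the M-1 level as threshold are illustrative assumptions, and `excesses_over_threshold` is a hypothetical helper.

```python
def excesses_over_threshold(severity, threshold):
    """Return (observed - threshold) for every observation above the threshold."""
    return [value - threshold for value in severity if value > threshold]


# Toy peak fluxes in W/m^2; three of them exceed the M-1 level.
peak_flux = [2e-7, 3e-6, 1.5e-5, 8e-7, 4e-5, 2e-4]
threshold = 1e-5

excesses = excesses_over_threshold(peak_flux, threshold)
print(len(excesses), "of", len(peak_flux), "observations kept")
```

In practice it may be more convenient to work with a rescaled severity, such as the logarithm of flux, so that the excesses are not tiny numbers.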

Using Transformers

We can now combine our original model and tail model using a transformer to achieve a more robust solution, which ideally learns what happens both below the threshold for a rare event and above it. In other words, we would like the model to learn the discrete flare or no-flare outcome as well as the shape of the excess risk defined by the tail model. For this, we can use a transformer with different heads. The model begins with magnetic history data and encodes it into a representation h; separate heads can then estimate different quantities, such as flare probability, uncertainty, tail exceedance, and precursor signal.

The classification head, which estimates the probability that our target is one given our data, is often trained with the binary cross-entropy, perhaps weighted to account for class imbalance.
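A class-weighted binary cross-entropy could look like the sketch below, written in plain Python so that it does not depend on any framework. Setting the positive weight to the ratio of negatives to positives is one common heuristic and is an assumption here, not something the model requires.

```python
import math


def weighted_bce(y_true, p_pred, pos_weight=1.0, eps=1e-12):
    """Mean binary cross-entropy with positive examples scaled by `pos_weight`."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        total += -(pos_weight * y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


y_true = [0, 0, 0, 0, 1]
p_pred = [0.1, 0.2, 0.1, 0.3, 0.4]

# Heuristic weight: number of negatives divided by number of positives.
pos_weight = (len(y_true) - sum(y_true)) / sum(y_true)

plain = weighted_bce(y_true, p_pred)
weighted = weighted_bce(y_true, p_pred, pos_weight=pos_weight)
print(round(plain, 3), round(weighted, 3))
```

With the weight applied, the single missed flare contributes four times as much to the loss, so the optimizer can no longer ignore it in favour of the quiet periods.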

We can use the Generalized Pareto Distribution (GPD), which provides a compact model for the excesses (our tail distribution). Here, σ controls the scale, and ξ controls the tail heaviness. The transformer produces a representation h of the recent solar state, and a tail head maps that representation into GPD parameters, so different magnetic histories imply different tail distributions for one active region (sunspot).
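For reference, the standard form of the GPD density for an excess above the threshold, and the negative log-likelihood a tail head would minimize, can be written as follows. The symbol y for the excess is notation assumed here; σ and ξ are as in the text, and the formulas hold for ξ ≠ 0.

```latex
f(y \mid \sigma, \xi) = \frac{1}{\sigma}\left(1 + \frac{\xi\, y}{\sigma}\right)^{-\frac{1}{\xi} - 1},
\qquad y \ge 0,\quad \sigma > 0,\quad 1 + \frac{\xi\, y}{\sigma} > 0

-\log f(y \mid \sigma, \xi) = \log \sigma + \left(1 + \frac{1}{\xi}\right)\log\left(1 + \frac{\xi\, y}{\sigma}\right)
```

As ξ approaches zero, the density reduces to an exponential distribution with mean σ. Larger positive ξ gives a heavier tail.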

The full objective combines two forecasting tasks. The classification term teaches the model to estimate whether a flare crosses the chosen threshold, while the tail term teaches it what the excess severity looks like after that threshold has been crossed. This matters because the model should not only learn “flare or no flare.” It should also learn how large the event might be once it enters the dangerous part of the distribution.
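The combination can be sketched as a sum of the two terms, with the tail term applied only to examples that crossed the threshold. Everything below is an illustrative assumption rather than a fixed recipe: the function names, the `tail_weight` trade-off parameter, and the idea that p, σ and ξ arrive as already-computed head outputs.

```python
import math


def bce(y, p, eps=1e-12):
    """Binary cross-entropy for one example."""
    p = min(max(p, eps), 1.0 - eps)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


def gpd_nll(excess, sigma, xi):
    """Negative log-density of a GPD excess (requires sigma > 0)."""
    if abs(xi) < 1e-8:  # exponential limit as xi -> 0
        return math.log(sigma) + excess / sigma
    z = 1.0 + xi * excess / sigma
    if z <= 0.0:
        return math.inf  # excess lies outside the support
    return math.log(sigma) + (1.0 + 1.0 / xi) * math.log(z)


def combined_loss(batch, tail_weight=1.0):
    """batch: list of (y, p, excess, sigma, xi); excess is ignored when y == 0."""
    total = 0.0
    for y, p, excess, sigma, xi in batch:
        total += bce(y, p)
        if y == 1:  # the tail term only sees threshold crossings
            total += tail_weight * gpd_nll(excess, sigma, xi)
    return total / len(batch)


# One quiet example and one flare with a (rescaled) excess of 0.8.
batch = [(0, 0.05, 0.0, 1.0, 0.2), (1, 0.7, 0.8, 1.0, 0.2)]
loss = combined_loss(batch)
print(round(loss, 3))
```

Masking the tail term this way means quiet periods still train the classification head, while the GPD parameters are shaped only by the events we care about.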

NASA, Sunspots 1302 Sep 2011 by NASA.jpg, September 24, 2011, via Wikimedia Commons. Public domain

Conclusion

Getting a good forecast for a very rare event with a transformer takes more than plugging in the data and minimizing the loss function. To predict solar flares, we must first apply localization and feature engineering to our data. Then we need to specify a model target that distinguishes between positive and negative events, and choose a metric that both rewards true positives and penalizes false positives. Because of the huge class imbalance, it also makes sense to build a tail model that uses the generalized Pareto distribution to model exceedances beyond a threshold. These loss functions can drive different heads of a transformer that both predicts whether an event will occur and estimates how large it might be once it enters the dangerous part of the distribution. The aim is improved predictive performance and a better-specified model.
